The Tiny New Chip That Could Change Who Gets to Use AI

April 1, 2026

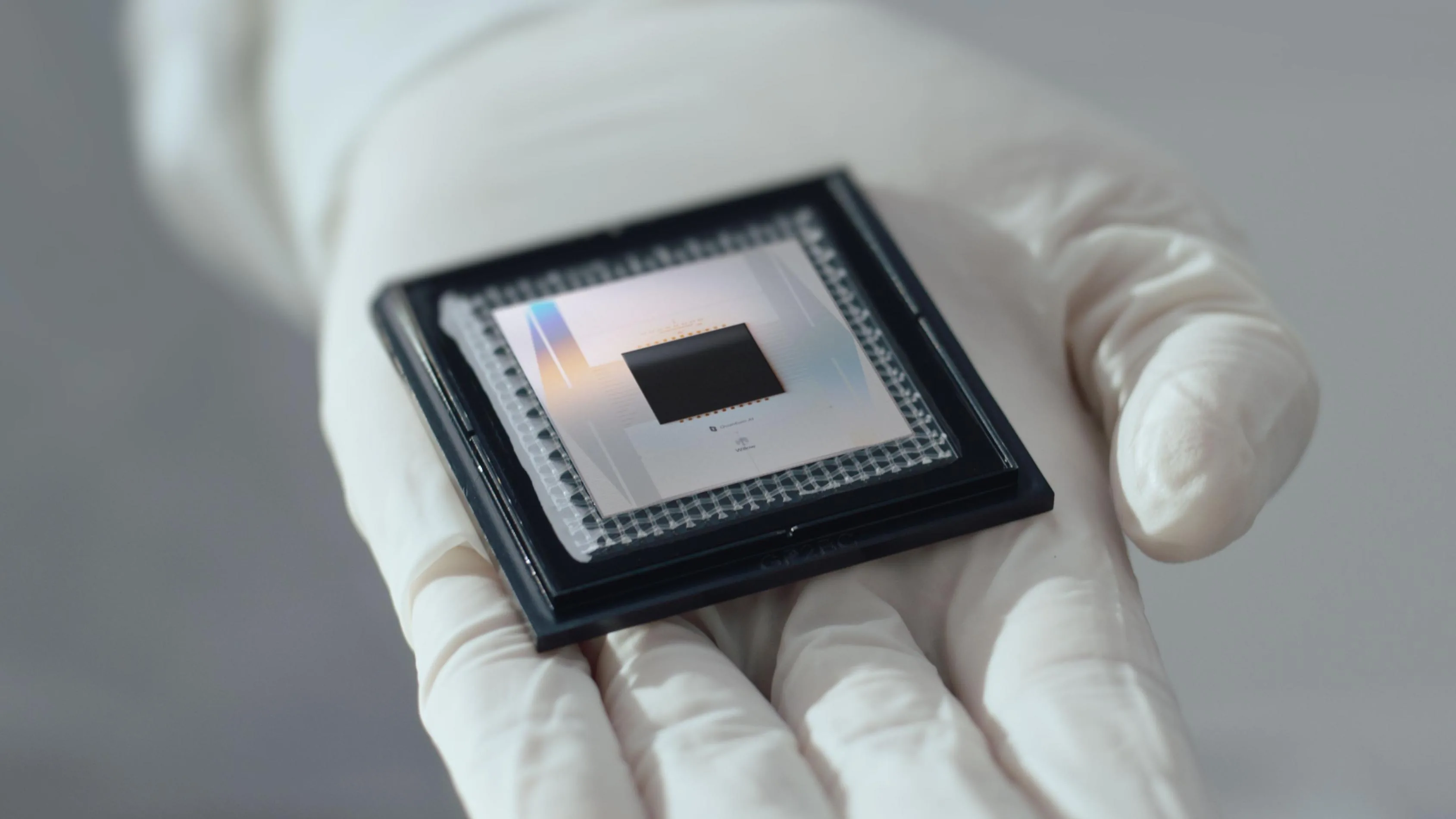

Many people still picture artificial intelligence as something that lives in giant data centers, fed by endless electricity and controlled by a few of the world’s largest companies. That image is still partly true. Training the biggest models requires vast computing power. But a recent invention is beginning to challenge that picture: tiny “edge AI” chips designed to run advanced machine-learning tasks directly on small devices. The shift sounds technical. In practice, it could change who gets to use powerful computing, where data goes, and which parts of daily life become less dependent on the cloud.

The most important fact is simple. Sending data back and forth to distant servers is often slow, expensive and power-hungry. It can also create privacy risks. That matters because more of modern life now depends on connected devices. Phones listen for voice commands. Cars track roads and pedestrians. Factory sensors watch for faults. Medical devices monitor speech, movement and heart rhythms. When every action depends on a remote server, weak internet service becomes a barrier. Costs rise. So do delays.

That is why chipmakers and device makers have spent the past few years racing to move more computing onto the device itself. This is no longer a niche experiment. Apple has pushed “neural engines” into its phones and laptops. Qualcomm markets AI-ready chips for mobile devices. Nvidia, Arm and Intel are all competing in parts of the edge computing market. Startups are going further, designing highly specialized chips that perform narrow AI tasks while using a fraction of the energy required by general-purpose processors. Analysts at Gartner have for years pointed to edge AI as a major technology trend, and industry forecasts from IDC and others have projected rapid growth in edge spending as companies try to process data closer to where it is created.

What is new is not just that chips are getting faster. It is that they are becoming more efficient in surprisingly small form factors. Researchers at universities and commercial labs have been developing neuromorphic and in-memory computing designs that reduce the need to move data between memory and processor, one of the biggest drains on power. IBM, Intel and several startups have published results showing that brain-inspired or memory-centered designs can sharply cut energy use for certain tasks such as pattern recognition and sensor analysis. The field is still young. Many prototypes are not ready for mass use. But the direction is clear: useful AI no longer has to mean a hot, expensive machine connected to a giant server farm.

The underlying cause is a hard limit that is both physical and economic. For years, the industry improved performance by making chips smaller and packing in more transistors. That continues, but the gains are harder to achieve and more costly. At the same time, AI workloads have exploded. The International Energy Agency has warned that data centers, especially those serving AI, are becoming a major source of electricity demand. The answer is not always to build ever larger centers. In many cases, it is smarter to avoid sending so much data there in the first place. If a wearable device can detect a fall, if a farm sensor can identify crop stress, or if a hearing aid can clean up speech in real time without cloud access, then local processing becomes more than a convenience. It becomes a design necessity.

There are already signs of what this changes in real life. In healthcare, researchers have tested edge AI tools that can flag irregular heart signals or monitor breathing patterns without transmitting every raw data point to a server. In rural areas, where broadband is uneven, that matters. The U.S. Federal Communications Commission has repeatedly documented persistent gaps in high-speed internet access, especially in remote regions. In manufacturing, local AI systems are being used to inspect parts on factory lines in milliseconds, reducing waste and downtime. In vehicles, speed is not optional. A car cannot wait for a distant server to decide whether an object in the road is a child, a bicycle or a plastic bag.

The privacy case is just as strong. Many consumers have grown uneasy about devices that constantly upload audio, video or health signals. Data breaches have made that fear rational. IBM’s annual data breach reports have consistently found that compromised personal data remains costly for companies and damaging for users. When more analysis happens on the device, less sensitive material needs to leave it. That does not solve privacy problems on its own. Bad software, weak security and poor business practices can still expose users. But keeping more computation local can reduce the amount of personal information circulating through corporate systems.

There is also a global access story here. Cloud AI depends on strong infrastructure, large server fleets and reliable payment systems. That model tends to favor rich companies and wealthy countries. Smaller, cheaper AI chips could lower that barrier. In India, parts of Africa and Latin America, where mobile adoption is high but fixed broadband can be limited or costly, useful local AI on low-cost phones or tools could widen access to translation, health screening, education support and crop advice. The digital divide will not disappear because of a better chip. But the design of hardware can either deepen inequality or ease it.

Still, the invention should not be romanticized. Tiny AI chips have real limits. They usually run smaller models. They may be excellent at one task and poor at another. Updating them can be difficult. Security becomes more important, not less, when intelligence is distributed across millions of devices. A weakly protected smart camera or medical sensor can become a target. The world has already seen what insecure internet-connected hardware can do. The Mirai botnet attack in 2016 turned poorly secured devices into a tool for major internet disruption. More capable edge devices will need stronger safeguards from the start.

That points to the policy and industry choices ahead. Companies should design edge systems with clear rules on what stays local, what gets uploaded and why. Regulators should press for plain-language disclosure, security updates and minimum device support periods. Public procurement can help too. Schools, hospitals and transport agencies can reward vendors that build efficient, privacy-conscious tools rather than systems that demand constant cloud dependence. Standards bodies and telecom regulators also have a role in making sure edge devices work reliably across networks and remain patchable over time.

The deeper lesson is easy to miss in the noise around AI. The most important new invention is not always the one with the biggest model or the loudest launch event. Sometimes it is a quieter piece of hardware that shifts power outward. Tiny AI chips will not replace the cloud. They do not end the need for large-scale computing. But they do offer a different path, one that could make advanced digital services faster, cheaper and more private for ordinary people. In technology, the future often looks massive until it suddenly becomes small enough to fit in your hand.